Online video-game matchmaking, adapting to change in data distribution, and model explainability

PandaScore Research Insights #3

Beyond skill rating: Advanced Matchmaking in Ghost Recon Online

What it is about: This paper, published in 2012, proposes BalanceNet, a matchmaking algorithm that builds balanced matches by taking player in-game statistics into account. It also uses a proxy for player fun to power FunNet, a matchmaking algorithm that maximizes player fun. Both models are evaluated on the online first-person shooter Ghost Recon Online.

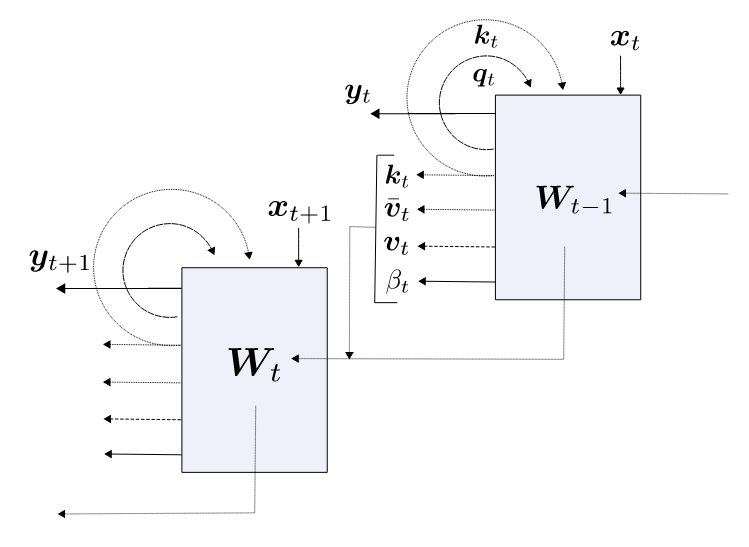

How it works: The input data is made of in-game statistics for the past games of each player. Both architectures, BalanceNet and FunNet, have the following inputs:

Embeddings, which summarize the past matches (e.g., “player is a good sniper”)

Attributes, which are the player in-game statistics (e.g., kill/death ratio)

The embeddings and attributes are iteratively combined at the player, then team, and then game level.

FunNet is a variation of BalanceNet that predicts the probability that a given player has fun, instead of the probability that their team wins. Because the true label would have to be gathered through in-game surveys, which were unavailable at the time, the authors used a proxy for player fun. This proxy is a mix of player-level metrics (e.g., average life span), team-based metrics (e.g., kill/death ratio) and global metrics (e.g., game length). These metrics encode the prior knowledge of domain experts.

Results: The industry standard TrueSkill achieves 78.5% accuracy while BalanceNet reaches 80.8% accuracy. This was expected, as TrueSkill does not take player in-game statistics into account and thus converges more slowly.

The authors confirm that FunNet is better at selecting fun matches than balanced matchmaking algorithms, but only as measured by their own proxy for fun. They state that real player feedback is needed to quantify how much of an improvement this algorithm provides.

Why it matters: Player enjoyment is hard to satisfy in competitive environments due to the presence of other players. Typical matchmaking assumes that balance is the only criterion that matters for players. But using more detailed inputs, such as player in-game statistics, not only yields better balance but also increases the fun score.

Our takeaways: Using finer data about players allows higher quality predictions. With the advent of quality play-by-play data, it is possible not only to predict standard targets (e.g., win probability) better than standard models do, but also to predict metrics that were simply not possible to estimate before (e.g., fun). This widens the field of what we can compute and gives us ways to innovate in the field of esports.

Also, we note that, because team features should be independent of the order of the players, the authors mixed features in a way that respects this independence when training their model. They did so in the same way as the seminal paper “Deep Sets” (2017), five years before that paper gave the formal proof of the theoretical framework!
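To make the order-independence idea concrete, here is a minimal sketch of Deep Sets style pooling: each player vector is transformed on its own, then the results are summed, so shuffling the players cannot change the team feature. The transform `phi`, the helper `pool_team` and the toy statistics are our own assumptions for illustration; they are not taken from the paper, whose actual layers are learned.

```python
import math
from itertools import permutations


def phi(player):
    """Per-player transform (hypothetical): a fixed nonlinearity on each stat."""
    return [math.tanh(x) for x in player]


def pool_team(players):
    """Sum the transformed player vectors; a sum does not depend on player order."""
    transformed = [phi(p) for p in players]
    return [sum(column) for column in zip(*transformed)]


# Toy team of three players with two made-up statistics each.
team = [[1.2, 0.3], [0.8, 0.9], [2.1, 0.1]]
reference = pool_team(team)

# Check every ordering of the players against the reference team feature.
order_invariant = all(
    all(math.isclose(a, b) for a, b in zip(pool_team(list(perm)), reference))
    for perm in permutations(team)
)
print(order_invariant)
```

Any symmetric pooling (mean, max) would give the same guarantee; the sum is simply the one used in the Deep Sets formulation.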

A Modern Self-Referential Weight Matrix That Learns to Modify Itself

What it is about: This paper, published in 2022, focuses on a new way for models to self-improve by adapting to changing data distributions in incoming input streams.

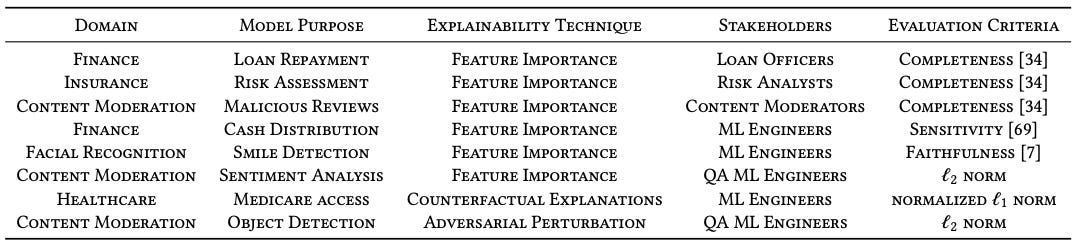

How it works: Their proposed approach is based on Fast Weight Programmers (FWP). An FWP has two neural networks, called the “slow” network and the “fast” network. The “slow” network learns to program the “fast” network by generating its weights. Here, instead, the authors use a single model that updates its own weights at every time step, adapting them to the current data stream distribution. In this setting, the model is trained with gradient descent only once, when it learns its initial weights.
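The sketch below illustrates what a weight matrix that rewrites itself can look like. It loosely follows the delta-rule style update as we understand it from the paper: the same matrix produces the output, a key, a query and a learning rate, then overwrites the value it stores for that key. This is a simplified single-head version with random initial weights (in the paper they are learned by gradient descent), and the names `srwm_step`, `matvec` and the dimensions are our own assumptions.

```python
import math
import random


def softmax(v):
    m = max(v)
    exps = [math.exp(x - m) for x in v]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def srwm_step(W, x, d_out):
    """One time step: compute the output, then let W modify itself."""
    d_in = len(x)
    out = matvec(W, softmax(x))
    y = out[:d_out]                           # model output
    k = out[d_out:d_out + d_in]               # key generated by W itself
    q = out[d_out + d_in:d_out + 2 * d_in]    # query generated by W itself
    beta = out[-1]                            # raw learning rate
    key = softmax(k)
    v_old = matvec(W, key)                    # value currently stored for this key
    v_new = matvec(W, softmax(q))             # value to store instead
    lr = 1.0 / (1.0 + math.exp(-beta))        # sigmoid keeps the rate in (0, 1)
    # Delta rule: move the stored value toward v_new along the key direction.
    W_next = [
        [w + lr * (vn - vo) * kj for w, kj in zip(row, key)]
        for row, vn, vo in zip(W, v_new, v_old)
    ]
    return y, W_next


random.seed(0)
d_in, d_out = 3, 2
n_rows = d_out + 2 * d_in + 1  # output + key + query + learning rate
W = [[random.gauss(0.0, 0.5) for _ in range(d_in)] for _ in range(n_rows)]
initial_W = [row[:] for row in W]

outputs = []
for x in [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5], [-1.0, 2.0, 0.0]]:
    y, W = srwm_step(W, x, d_out)
    outputs.append(y)

weights_changed = W != initial_W
print(weights_changed)
```

No gradient is computed inside the loop: after the initial weights are set, all adaptation happens through the forward pass.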

Why it matters: Recently the same authors showed that the attention layer of Linear Transformers behaves similarly to FWP. As transformers are at the forefront of many improvements in deep learning applications, it is paramount to understand them better. Dealing with data drift (when the distribution of the data differs between training time and production use) is far from easy. You need to spot it and then act on it by retraining the model on the new data. This is often costly, especially in cases where this process needs to be done manually. This paper explores an area of research that enables online updates to a running model.
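As an illustration of the "spot it" step, here is one common way to flag drift on a single numeric feature: the two-sample Kolmogorov-Smirnov statistic, which is the largest gap between the empirical distributions of the training data and the production data. This is not part of the paper; the synthetic Gaussian data, the shift of 1.0 and the helper name `ks_statistic` are assumptions made for the example.

```python
import random
from bisect import bisect_right


def ks_statistic(a, b):
    """Largest absolute gap between the empirical CDFs of samples a and b."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    return max(
        abs(bisect_right(a_sorted, p) / len(a) - bisect_right(b_sorted, p) / len(b))
        for p in a_sorted + b_sorted
    )


rng = random.Random(42)
train = [rng.gauss(0.0, 1.0) for _ in range(500)]       # training-time feature
prod_same = [rng.gauss(0.0, 1.0) for _ in range(500)]   # production, no drift
prod_shift = [rng.gauss(1.0, 1.0) for _ in range(500)]  # production after a shift

ks_same = ks_statistic(train, prod_same)
ks_shift = ks_statistic(train, prod_shift)
print(ks_same, ks_shift)
```

A statistic close to 0 means the two samples look alike, while a large one suggests the feature has drifted. In practice the alert threshold has to be chosen per feature and per sample size.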

Our takeaways: The paper shows a legitimate way to keep a model updating as the input data distribution changes. In esports, it is common for a game update to change the way teams play and thus also affect the data distribution given to the model. In this regard, such an approach could help adapt to these kinds of changes. That being said, we think that the proposed architecture might be susceptible to catastrophic forgetting. This might not be an issue for all domains, especially if the drift in the data distribution is subtle and happens over a long period of time. However, some domains can experience sudden and cyclical shifts that such a model would have a hard time dealing with.

Explainable Machine Learning in Deployment

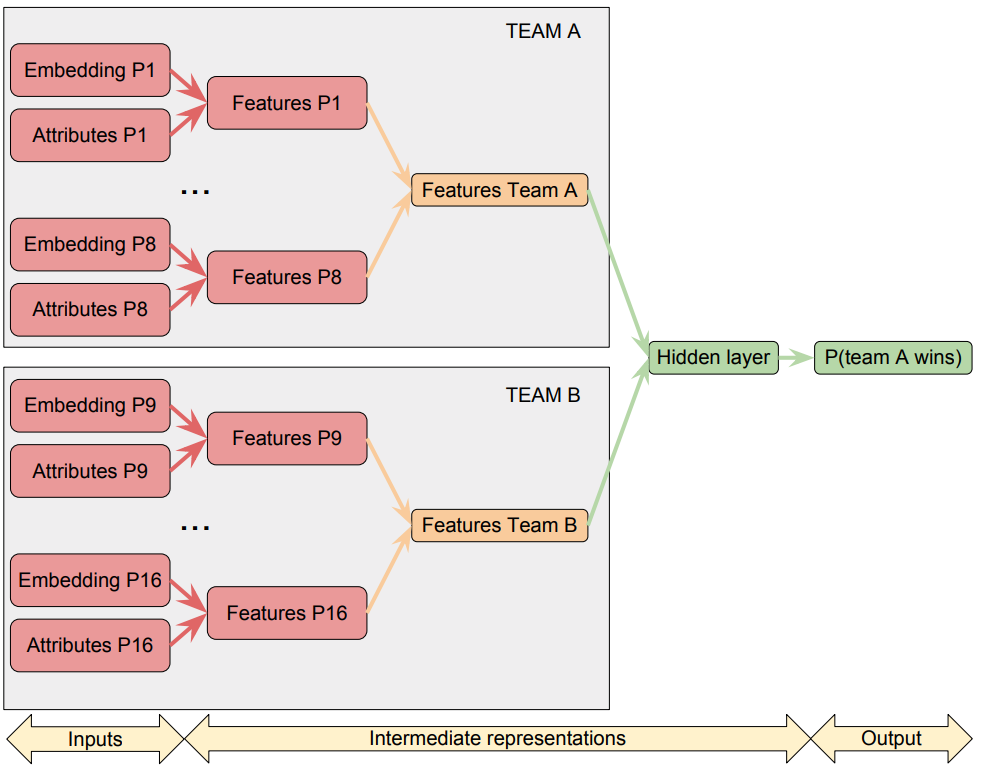

What it is about: This paper, written by researchers from Baidu and IBM with support from DeepMind, was published in 2020. Its goal is to find out how organizations use explainable machine learning in deployment.

How it works: They interviewed two groups. The first group was made up of people not working with explainability tools. With this group, they aimed to discover their expectations regarding explainability. The other group was composed of data scientists working with explainability tools. With them, they aimed to explore how such tools are used in practice.

What they found out: Explainable machine learning is important to debug and monitor the model, but also to provide transparency about it:

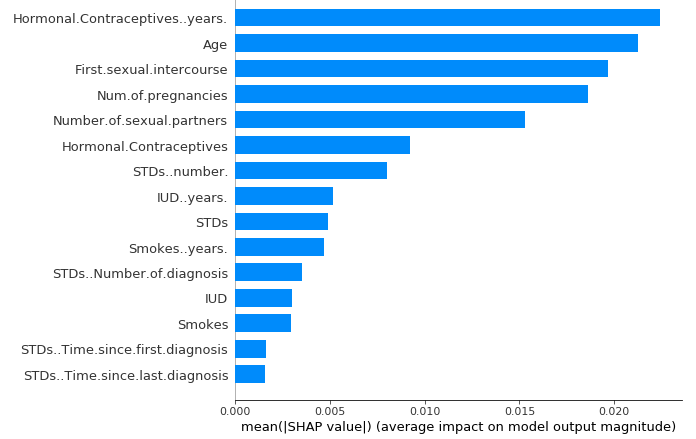

The group that does not use explainability tools sees them as ways to audit and communicate about models, providing concrete information. For end-users, they help to understand the decision of the model. For data scientists, they help to validate the model’s behavior. For example, for someone asking for a loan, you can know which feature had the most impact on the rejection of the loan.

By looking at the group that does use explainability tools, the researchers noticed that explanations tend to stay in the hands of data scientists. For them, explainability is rarely used to explain what happens inside a “black-box” model to the end-user. Of the several explainability techniques in use, feature importance was the most common.
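To give an idea of what feature importance means in practice, here is a minimal sketch of one popular variant, permutation importance: shuffle one feature column and measure how much the model's accuracy drops. The loan model, its features (`income`, `debt`, `age`) and the synthetic data are hypothetical and only echo the loan example above; they do not come from the paper. The labels are generated by the model itself, so the baseline accuracy is 1.0 by construction.

```python
import random


def model(row):
    """Hypothetical loan model: approve when income outweighs debt; ignores age."""
    income, debt, age = row
    return 1 if income - 2.0 * debt > 0 else 0


def accuracy(rows, labels):
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(rows, labels, col, rng):
    """Accuracy drop when the values of column `col` are shuffled across rows."""
    shuffled = [r[col] for r in rows]
    rng.shuffle(shuffled)
    permuted = [r[:col] + [v] + r[col + 1:] for r, v in zip(rows, shuffled)]
    return accuracy(rows, labels) - accuracy(permuted, labels)


rng = random.Random(0)
rows = [
    [rng.uniform(0, 10), rng.uniform(0, 5), rng.uniform(18, 80)]
    for _ in range(1000)
]
labels = [model(r) for r in rows]

importances = {
    name: permutation_importance(rows, labels, i, rng)
    for i, name in enumerate(["income", "debt", "age"])
}
print(importances)
```

Shuffling `age` cannot change any prediction because the model never reads it, so its importance is exactly zero, while `income` and `debt` both show a clear accuracy drop.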

Thanks to the interviews, they established the following guidelines. First, identify the stakeholders. As they will be the consumers of the explanations, you need to understand their needs. Then engage with them to learn what type of explanation they need, how often they need it, and what they intend to do with it.

Why it matters: Having explainability tools is important, benefiting both data scientists and end-users. The guidelines this paper gives can impact the way we explain machine learning today. For data scientists, explainability tools result in models that are easier to understand, compare, and validate. For end-users, those tools provide transparency and understandability.

Our takeaways: Explainable machine learning is key at PandaScore: our esports traders work closely with machine learning models provided by the data science team. For data scientists, exposing the logic of the models and confronting it with the expertise of the traders helps spot unexpected model behaviours. For traders, having a better understanding of the models helps them in their daily operations. This collaboration is key for us to have the best odds possible in the industry.